A production-style coding tutorial for NetworKit 11.2.1: large-scale graph analytics, communities, cores, and sparsification

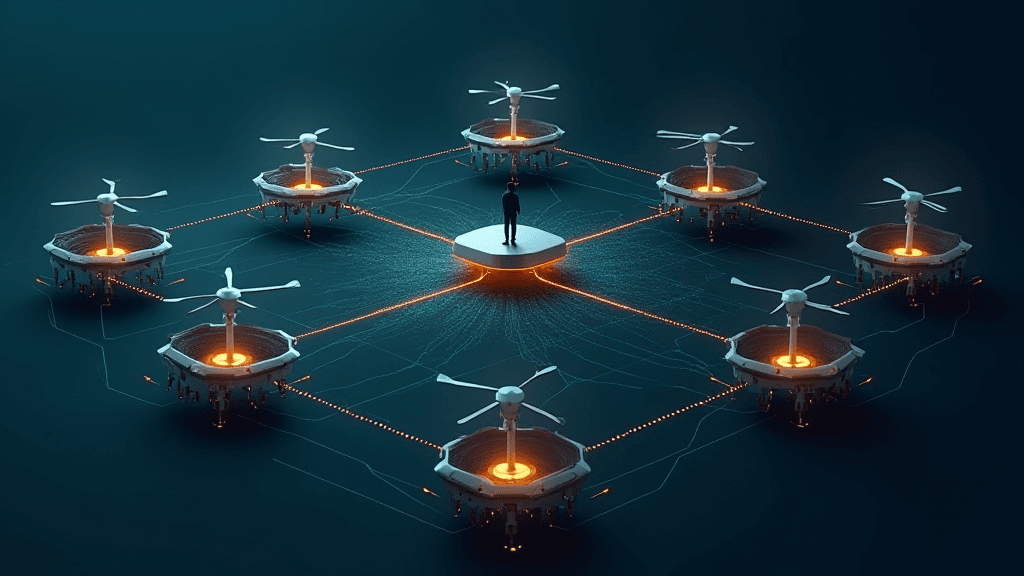

This tutorial walks through a large-scale graph-processing pipeline in NetworKit 11.2.1, with an emphasis on speed, memory efficiency, and version-safe APIs. It generates a large scale-free graph, extracts the largest connected component, computes structural signals via k-core decomposition and centrality ranking, detects communities with PLM, evaluates their quality using modularity, estimates the distance structure with effective and estimated diameters, and then sparsifies the graph to reduce cost while preserving key properties. The sparsified graph is exported as an edge list for reuse in downstream workflows, benchmarking, or data preprocessing for graph machine learning.
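To make three of the pipeline's quantities concrete (largest connected component, k-core numbers, and modularity), here is a minimal plain-Python sketch on a toy graph. It deliberately does not call NetworKit, and the helper names (`build_adjacency`, `largest_component`, `core_numbers`, `modularity`) are hypothetical, chosen for illustration only. The two-community split is assigned by hand rather than detected by PLM, and the peeling loop is quadratic for readability, so this is not how a large-scale run would be implemented.

```python
from collections import defaultdict, deque


def build_adjacency(edges):
    """Undirected simple graph as a dict: node -> set of neighbours."""
    adj = defaultdict(set)
    for u, v in edges:
        if u != v:  # ignore self-loops
            adj[u].add(v)
            adj[v].add(u)
    return dict(adj)


def largest_component(adj):
    """Node set of the largest connected component, found by BFS."""
    seen, best = set(), set()
    for start in adj:
        if start in seen:
            continue
        comp, queue = {start}, deque([start])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in comp:
                    comp.add(v)
                    queue.append(v)
        seen |= comp
        if len(comp) > len(best):
            best = comp
    return best


def core_numbers(adj):
    """k-core decomposition by repeatedly peeling a minimum-degree node."""
    degree = {u: len(nbrs) for u, nbrs in adj.items()}
    remaining = set(adj)
    core, k = {}, 0
    while remaining:
        u = min(remaining, key=degree.__getitem__)  # O(n) scan, for clarity
        k = max(k, degree[u])  # core level never decreases while peeling
        core[u] = k
        remaining.remove(u)
        for v in adj[u]:
            if v in remaining:
                degree[v] -= 1
    return core


def modularity(adj, community):
    """Newman modularity: sum over communities of e_c/m - (d_c/(2m))^2."""
    m = sum(len(nbrs) for nbrs in adj.values()) / 2
    intra = defaultdict(float)    # edges inside each community
    deg_sum = defaultdict(float)  # total degree of each community
    for u, nbrs in adj.items():
        deg_sum[community[u]] += len(nbrs)
        for v in nbrs:
            if community[u] == community[v]:
                intra[community[u]] += 0.5  # each edge is visited twice
    return sum(intra[c] / m - (deg_sum[c] / (2 * m)) ** 2 for c in deg_sum)


# Toy graph: two triangles joined by a bridge, plus a detached edge (6, 7).
edges = [(0, 1), (1, 2), (0, 2), (2, 3), (3, 4), (4, 5), (3, 5), (6, 7)]
adj = build_adjacency(edges)

lcc = largest_component(adj)
sub = {u: adj[u] & lcc for u in lcc}  # induced subgraph on the component

cores = core_numbers(sub)
community = {u: 0 if u <= 2 else 1 for u in lcc}  # hand-assigned split
q = modularity(sub, community)

print(sorted(lcc), cores, round(q, 4))
```

On a real workload these steps would be delegated to the library's parallel implementations; the sketch only shows what each number means, so that the outputs of the actual pipeline are easier to sanity-check on a small input.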

No direct impact: the article is a coding tutorial on large-scale graph analytics with NetworKit 11.2.1, with no established link to specific French or European companies, to legislation (AI Act, GDPR), or to particular sectors.
